Overview

Discrete Controller Synthesis (DCS) takes a Labeled Transition System (LTS) model of the environment, together with safety properties (states to avoid) and liveness properties (goals to achieve), and automatically generates a controller that is mathematically guaranteed to satisfy these properties, provided the environment behaves as modeled.

Unlike heuristic approaches, DCS returns a correct controller whenever one exists and otherwise proves that no such controller is possible. This formal guarantee is crucial for safety-critical systems.
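As a rough illustration of this "controller or proof of impossibility" behavior, the following minimal sketch handles the safety part only, assuming a tiny explicit LTS whose actions are split into controllable and uncontrollable ones. The function name `synthesize_safety_controller`, the example model, and the action names are all hypothetical and are not taken from any of the lab's tools. Liveness properties are omitted, and the sketch lets the controller disable every controllable action in a state.

```python
from typing import Dict, List, Optional, Set, Tuple

Transition = Tuple[str, str, str]  # (source state, action, target state)


def synthesize_safety_controller(
    transitions: List[Transition],
    controllable: Set[str],
    bad_states: Set[str],
    initial: str,
) -> Optional[Dict[str, Set[str]]]:
    """Return the controllable actions to allow in each safe state, or None."""
    states = {initial} | {s for s, _, _ in transitions} | {t for _, _, t in transitions}

    # Least fixed point: a state is losing if it is bad, or if some
    # uncontrollable transition (which the controller cannot disable)
    # leads to a losing state.
    losing = set(bad_states)
    changed = True
    while changed:
        changed = False
        for src, action, dst in transitions:
            if src not in losing and action not in controllable and dst in losing:
                losing.add(src)
                changed = True

    if initial in losing:
        return None  # no controller can keep the system safe

    # In every safe state, allow only controllable actions whose targets are all safe.
    controller: Dict[str, Set[str]] = {}
    for state in states - losing:
        offered = {a for s, a, _ in transitions if s == state and a in controllable}
        unsafe = {a for s, a, t in transitions
                  if s == state and a in controllable and t in losing}
        controller[state] = offered - unsafe
    return controller


# Hypothetical robot model: "obstacle" and "arrive" are uncontrollable events.
robot = [
    ("idle", "start", "moving"),
    ("moving", "obstacle", "near"),
    ("moving", "arrive", "idle"),
    ("near", "stop", "idle"),
    ("near", "continue", "crash"),
]
controller = synthesize_safety_controller(
    robot, {"start", "stop", "continue"}, {"crash"}, "idle"
)

# If the environment alone can force a crash, no controller exists.
no_controller = synthesize_safety_controller(
    [("s0", "fault", "crash")], set(), {"crash"}, "s0"
)
```

In the robot model the resulting controller disables `continue` in state `near`, while the second call returns `None` because an uncontrollable `fault` leads straight to the bad state.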

Our lab positions DCS as the core engine of self-adaptive software and advances research in three directions: algorithm efficiency, integration with AI/ML, and diverse real-world applications.

Synthesis Algorithm Research

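The state explosion problem is the major challenge in DCS: an environment model is typically the parallel composition of several components, and its number of states grows exponentially with the number of components, so the search space quickly becomes intractable. We pursue the following approaches to improve synthesis efficiency.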

- On-the-fly synthesis (KBSE 2025): Explores only the necessary portions of the state space via lazy evaluation, significantly reducing memory usage.

- Differential synthesis (Hirano et al., ICIEA 2021): When the environment model changes, only the changed portion is re-synthesized, avoiding full recomputation.

- Pre-controller synthesis (Arioka et al., ICCSCE 2023): A general pre-controller is synthesized offline before deployment, reducing computational cost at runtime.

- LTS minimization for state explosion mitigation (Yamaguchi et al., KBSE 2025): Merges equivalent states in the LTS to minimize the model before synthesis, reducing the search space.

- Makespan optimization (Shimizu et al., ICCSCE 2023): Applies DCS to parallel task scheduling to optimize the makespan of the synthesized controller.

- Action priority-guided search (Takeuchi et al., ICCE-Asia 2024): Incorporates action priority information into the exploration order to accelerate convergence to goal states.

- ML-based performance prediction (Ikeda et al., ICCE-Asia 2024): Uses machine learning to predict synthesis computational cost, enabling optimized resource allocation.
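To make the idea of merging equivalent states concrete, here is a minimal sketch that assumes "equivalent" means strongly bisimilar and uses naive partition refinement. The equivalence actually used in the cited LTS minimization work may differ, and the helper names `bisimulation_blocks` and `quotient` are hypothetical. A real DCS setting would also start from a partition that separates bad and goal states, which this sketch leaves out.

```python
from typing import Dict, FrozenSet, List, Set, Tuple

Transition = Tuple[str, str, str]  # (source state, action, target state)


def bisimulation_blocks(states: Set[str], transitions: List[Transition]) -> Dict[str, int]:
    """Map each state to a block id; states in the same block are bisimilar."""
    block = {s: 0 for s in states}  # start with every state in one block
    num_blocks = 1
    while True:
        ids: Dict[Tuple[int, FrozenSet[Tuple[str, int]]], int] = {}
        refined: Dict[str, int] = {}
        for s in sorted(states):
            # Signature: current block plus the (action, target block) pairs offered.
            moves = frozenset((a, block[t]) for src, a, t in transitions if src == s)
            refined[s] = ids.setdefault((block[s], moves), len(ids))
        if len(ids) == num_blocks:
            return refined  # no block was split, so the partition is stable
        block, num_blocks = refined, len(ids)


def quotient(transitions: List[Transition], block: Dict[str, int]) -> Set[Tuple[int, str, int]]:
    """Build the transitions of the minimized LTS over block ids."""
    return {(block[s], a, block[t]) for s, a, t in transitions}


# Hypothetical example: p1 and p2 behave identically and can be merged.
example_states = {"p0", "p1", "p2", "p3"}
example_transitions = [
    ("p0", "a", "p1"),
    ("p0", "a", "p2"),
    ("p1", "b", "p3"),
    ("p2", "b", "p3"),
]
blocks = bisimulation_blocks(example_states, example_transitions)
minimized = quotient(example_transitions, blocks)
```

Here the four original states collapse into three blocks and the four transitions into two, so a synthesis run on the quotient has less to explore. The naive loop rescans all transitions per state and is meant only for illustration, not for large models.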

Integration with AI and Machine Learning

Recent work integrates AI into DCS algorithms to address large-scale problems that are intractable for traditional search-based methods.

- Reinforcement learning-guided search (Ubukata et al., QRS 2025; SEAMS 2026): An RL agent guides the DCS search process, enabling efficient controller discovery even for state-explosion-prone problems.

- Mixture-of-Experts RL for robust synthesis (Ubukata et al., SEAMS 2026): A Mixture-of-Experts architecture combining multiple RL policies improves robustness across diverse problem structures.

- LLM-based stepwise synthesis policy design (Ishimizu et al., ICCE-Asia 2024): Large Language Models (LLMs) are used to iteratively design and refine DCS exploration policies.

- Automated problem repair via LLM (Ishimizu et al., TOWERS 2025): When synthesis fails, an LLM analyzes the control problem and automatically repairs or reformulates the specification.
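The common thread in guided exploration is that some policy decides which frontier state to expand next. The sketch below is a simplified stand-in, not the method of the cited papers: it performs plain best-first reachability search rather than full controller synthesis, and the `score` function is hand-written where an RL agent or LLM-designed policy would supply a learned or generated one. All names (`guided_search`, `grid_successors`, the 5x5 grid) are hypothetical.

```python
import heapq
from typing import Callable, Iterable, List, Optional, Tuple

State = Tuple[int, int]


def guided_search(
    initial: State,
    successors: Callable[[State], Iterable[Tuple[str, State]]],
    is_goal: Callable[[State], bool],
    score: Callable[[State], float],
) -> Tuple[Optional[List[str]], int]:
    """Best-first exploration: returns (action path or None, states expanded)."""
    counter = 0  # tie-breaker so the heap never has to compare states
    frontier = [(-score(initial), counter, initial, [])]
    visited = {initial}
    expanded = 0
    while frontier:
        _, _, state, path = heapq.heappop(frontier)  # highest score first
        expanded += 1
        if is_goal(state):
            return path, expanded
        for action, nxt in successors(state):
            if nxt not in visited:
                visited.add(nxt)
                counter += 1
                heapq.heappush(frontier, (-score(nxt), counter, nxt, path + [action]))
    return None, expanded


SIZE = 5
GOAL = (SIZE - 1, SIZE - 1)


def grid_successors(state: State) -> List[Tuple[str, State]]:
    """States are generated lazily, only when the search asks for them."""
    x, y = state
    moves = []
    if x + 1 < SIZE:
        moves.append(("right", (x + 1, y)))
    if y + 1 < SIZE:
        moves.append(("up", (x, y + 1)))
    return moves


def distance_score(state: State) -> float:
    # Hand-written stand-in for a learned policy: closer to the goal is better.
    return -float(abs(GOAL[0] - state[0]) + abs(GOAL[1] - state[1]))


guided_path, guided_expanded = guided_search(
    (0, 0), grid_successors, lambda s: s == GOAL, distance_score
)
blind_path, blind_expanded = guided_search(
    (0, 0), grid_successors, lambda s: s == GOAL, lambda s: 0.0
)
```

With a constant score the search degenerates to breadth-first order, so comparing `guided_expanded` with `blind_expanded` gives a rough sense of how much a good exploration policy can save, even on this toy grid.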

Applications

DCS has been applied to a range of systems where formal behavioral guarantees are required.

- Robot path planning (Li et al., ACSOS 2023 demo): Real-time path planning and obstacle avoidance for autonomous mobile robots using DCS.

-

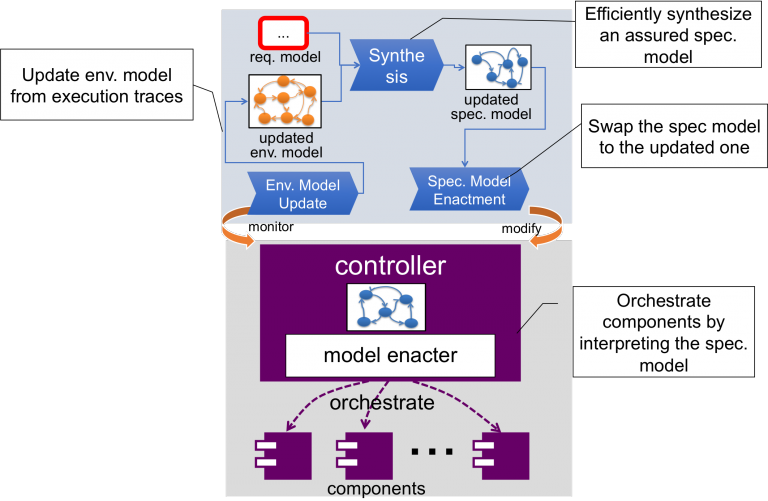

IoT/Node-RED systems (Yamauchi et al., IEEE IoT 2021) Safe automated control of IoT devices via DCS applied to Node-RED workflows.

- Systems-of-Systems control (Li et al., SESoS@ICSE 2024): Extending DCS to Systems-of-Systems where multiple independent subsystems must cooperate.

- Cloud-native intrusion recovery (KBSE 2024): After detecting a security breach in a cloud environment, DCS drives automated recovery to a safe state.

Selected Publications

- Chenyu Hu et al. “Adapting Aggregation Rule for Robust Federated Learning under Dynamic Attacks.” SEAMS 2025.

- Toshihide Ubukata et al. “Graph-Contextual Reinforcement Learning for Efficient Exploration in Directed Controller Synthesis.” QRS 2025.

- Toshihide Ubukata et al. “Robust Exploration in Directed Controller Synthesis via Mixture-of-Experts Reinforcement Learning.” SEAMS 2026.

- Tomoki Yamaguchi et al. “LTS Minimization for Scalable Discrete Controller Synthesis.” KBSE 2025.

- Yuki Arioka et al. “Pre-controller Synthesis for Runtime Controller Synthesis.” ICCSCE 2023.

- Yuki Shimizu et al. “Stepwise Comparison for Minimizing Controller Makespan.” ICCSCE 2023.

- Yusei Ishimizu et al. “Towards Efficient Discrete Controller Synthesis: Semantics-Aware Stepwise Policy Design via LLM.” ICCE-Asia 2024.

- Yusei Ishimizu et al. “Automated Problem Repair for Discrete Controller Synthesis via LLM.” TOWERS 2025.

- Takuto Yamauchi et al. “A Development Method for Safety Node-RED Systems using Discrete Controller Synthesis.” The 14th IEEE International Conference on Internet of Things, 2021.

- Jialong Li et al. “Employing Discrete Controller Synthesis for Developing Systems-of-Systems Controllers.” SESoS@ICSE 2024.